Change Data Capture - Streaming Database Changes to Your Data Lake

From Binlogs to Bronze Tables: Production Patterns for Debezium, Flink CDC, and AWS DMS

Change Data Capture (CDC) is how mature data platforms maintain real-time synchronization between operational databases and analytical systems. Instead of running expensive full-table extracts every night, CDC captures individual row-level changes (inserts, updates, and deletes) as they happen and streams them to your data lake within seconds.

After implementing CDC pipelines at multiple companies, I’ve learned that the technology choice matters less than understanding the underlying mechanics. Whether you use Debezium, Flink CDC, or a managed service like AWS DMS, the patterns and pitfalls are remarkably consistent.

Today we’ll cover:

How CDC actually works — transaction logs, logical replication, and change events

Debezium deep dive — production configuration with exactly-once semantics (v3.3)

Flink CDC pipelines — YAML-based streaming integration (v3.5)

AWS DMS Serverless — managed CDC for AWS-native architectures

Lakehouse CDC — Delta Lake CDF and Iceberg incremental reads

Production patterns — initial loads, schema evolution, and ordering guarantees

Security & compliance — PII masking, encryption, and audit trails

Migration playbook — moving from batch ETL to CDC

1. How CDC Actually Works

CDC identifies and captures only the changes (inserts, updates, and deletes) made to a source database or application and delivers them to a target system in near real time. Because it avoids bulky full-table loads, it keeps systems synchronized efficiently and supports real-time analytics, replication, and data warehousing on low-latency pipelines.

Every production database maintains a transaction log: a sequential record of every change. CDC tools read these logs and convert them into structured change events.

The Transaction Log Foundation

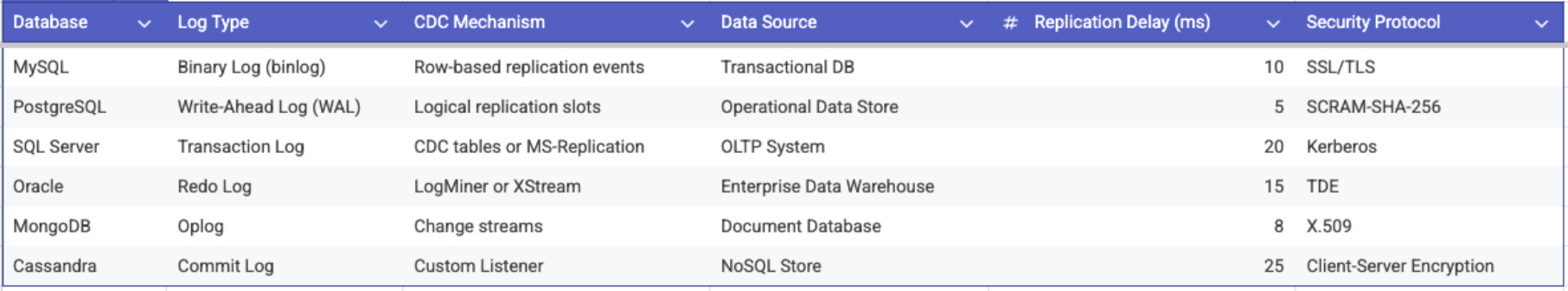

Different databases expose their logs differently:

The key insight: CDC doesn’t query your tables repeatedly. It reads the log that the database was already writing anyway, which keeps the added load on the source system low.
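To make the log-reading idea concrete, here is a toy model in Python. It is a sketch under loud assumptions: `ToyDatabase` and `LogReader` are hypothetical names invented for illustration, and the "log" is a plain in-memory list rather than a real binlog or WAL. The point it shows is that a reader tailing the log from a saved position sees every intermediate change, while a query against the table only ever sees the final state.

```python
from dataclasses import dataclass, field


@dataclass
class ToyDatabase:
    """Hypothetical in-memory stand-in for a database with a transaction log."""
    rows: dict = field(default_factory=dict)
    log: list = field(default_factory=list)  # append-only, like a binlog or WAL

    def upsert(self, key, row):
        before = self.rows.get(key)
        self.rows[key] = row
        # Every write is recorded in the log as a side effect of the write itself.
        self.log.append({"op": "c" if before is None else "u",
                         "before": before, "after": row})

    def delete(self, key):
        before = self.rows.pop(key)
        self.log.append({"op": "d", "before": before, "after": None})


class LogReader:
    """Tails the log from a saved position; it never queries db.rows."""

    def __init__(self, db):
        self.db = db
        self.position = 0  # analogous to a binlog file/offset or an LSN

    def poll(self):
        events = self.db.log[self.position:]
        self.position = len(self.db.log)
        return events


db = ToyDatabase()
reader = LogReader(db)

db.upsert(1001, {"id": 1001, "status": "pending"})
db.upsert(1001, {"id": 1001, "status": "shipped"})
db.delete(1001)

ops = [event["op"] for event in reader.poll()]
print(ops)            # ['c', 'u', 'd']
print(db.rows)        # {} -- a table query at this point sees nothing at all
print(reader.poll())  # [] -- nothing new since the saved position
```

A nightly extract run after these three writes would find an empty table and miss the order entirely; the log reader recovers all three changes from its saved position.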

Anatomy of a Change Event

A typical CDC event contains:

    {
      "before": { "id": 1001, "status": "pending", "amount": 99.99 },
      "after": { "id": 1001, "status": "shipped", "amount": 99.99 },
      "source": {
        "version": "3.3.0.Final",
        "connector": "mysql",
        "ts_ms": 1706025600000,
        "db": "orders",
        "table": "order_items",
        "server_id": 12345,
        "file": "mysql-bin.000042",
        "pos": 15847293
      },
      "op": "u",
      "ts_ms": 1706025600123
    }

The op field tells you the operation type: c (create/insert), u (update), d (delete), or r (read, used during initial snapshots). The before and after fields carry the row state on either side of the change, so downstream systems can apply it correctly. For inserts and snapshot reads, before is null; for deletes, after is null.
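As a rough illustration of how a consumer might use these fields, the Python sketch below applies a short sequence of events to an in-memory replica. The function name `apply_change_event`, the dict-based replica, and the assumption that `id` is the primary key are all hypothetical choices made for this example; a real sink would write to a table format instead.

```python
def apply_change_event(table: dict, event: dict, key: str = "id") -> None:
    """Apply one change event to an in-memory replica keyed by primary key.

    Hypothetical helper: assumes the event has "op", "before", and "after"
    fields shaped like the sample above, and that `key` is the primary key.
    """
    op = event["op"]
    if op in ("c", "r", "u"):
        # Treat create, snapshot read, and update as an upsert of the new state,
        # so replaying the same event twice leaves the replica unchanged.
        row = event["after"]
        table[row[key]] = row
    elif op == "d":
        # Deletes carry the old row in "before"; "after" is null.
        row = event["before"]
        table.pop(row[key], None)
    else:
        raise ValueError(f"unknown op: {op!r}")


replica = {}
events = [
    {"op": "r", "before": None,
     "after": {"id": 1001, "status": "pending", "amount": 99.99}},
    {"op": "u",
     "before": {"id": 1001, "status": "pending", "amount": 99.99},
     "after": {"id": 1001, "status": "shipped", "amount": 99.99}},
    {"op": "c", "before": None,
     "after": {"id": 1002, "status": "pending", "amount": 15.00}},
    {"op": "d",
     "before": {"id": 1002, "status": "pending", "amount": 15.00},
     "after": None},
]

for event in events:
    apply_change_event(replica, event)

print(replica)
# {1001: {'id': 1001, 'status': 'shipped', 'amount': 99.99}}
```

Handling c, r, and u as the same upsert is a deliberate simplification: it keeps the apply step idempotent, which matters when events are redelivered after a restart.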